TrustLLM celebrates a year of innovation

As we wrap up the first year of the TrustLLM project, it’s incredible to see how far we’ve come. Our journey has been marked by significant milestones, from securing substantial HPC resources to advancing our data curation efforts.

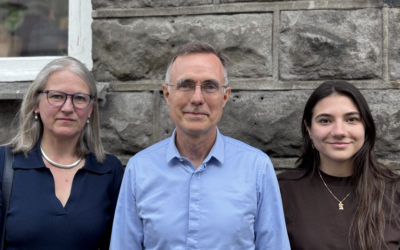

In an interview, Professor Fredrik Heintz shared his thoughts on what makes the TrustLLM project unique:

“We are not only a traditional research project focused on conducting new and fascinating research, but we also aim to train, evaluate, and test models in both benchmarks and real-world applications. The real value of our project lies in how we connect and integrate these different parts.”

One of our key achievements this year was securing 77,500 node hours on the MareNostrum cluster, which allowed us to train our baseline model. This was a significant milestone, despite the challenges we faced with resource management and training efficiency.

Data curation has been another critical focus. Leveraging data from various projects and developing an initial data management plan have taught us valuable lessons about the complexities of acquiring and curating high-quality, trustworthy data.

Looking ahead, our goals remain the same: to curate increasing amounts of high-quality data, secure more HPC capacity, and integrate our research into the models we train. We are committed to documenting the lessons we learn and sharing our insights with the broader community.

As we celebrate this milestone, we want to thank everyone involved for their hard work and dedication. Here’s to another year of innovation and progress!