5 February, 12:00-13:00 CET

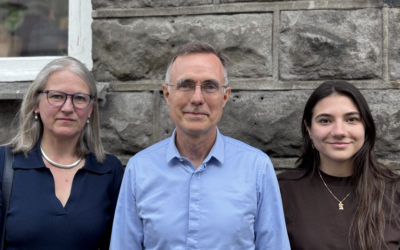

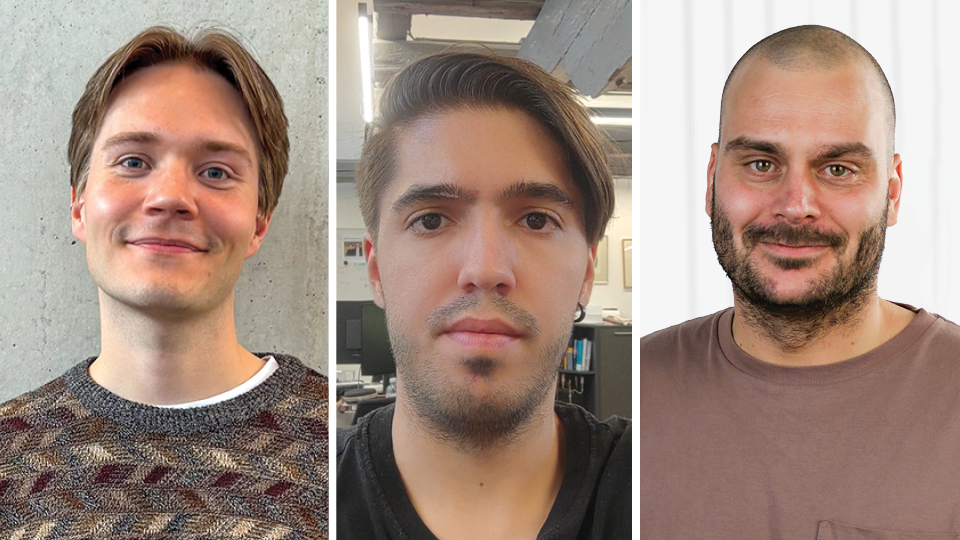

Garðar Ingvarsson Juto (Miðeind), Ilker Kesen (University of Copenhagen), Andreas Holm (Alexandra Institute).

How do LLMs actually learn new languages? How can LLMs learn small languages like Faroese better? Can LLMs learn languages from images rather than text?

In this three-part TrustLLM webinar, we will look at one of the most overlooked parts of LLM training: tokenization. What is often treated as a mere preprocessing step in fact shapes how a model learns and how well it handles languages with very different amounts of training data.

What if we want to teach an LLM an entirely new language? What if we just swap its vocabulary? In part 1 of the webinar, we will walk through how, instead of retraining the entire model, we can keep the Transformer backbone and just help it learn new words while keeping its old behavior the same.

Faroese is a small language that is very different from English. With common tokenization strategies, a vocabulary built for one specific language can isolate that language and prevent it from benefiting from high-resource languages like English. In part 2 of the webinar, we will go through how we can break that isolation using dynamic tokenization.

What if we don’t use regular tokenization at all? In part 3 of the webinar, we will show how Pixel models learn languages from visual patterns in characters rather than from sentences. This approach solves problems like out-of-vocabulary words, but it comes with its own challenges.

The webinar will be presented by Garðar Ingvarsson Juto (Miðeind), Ilker Kesen (University of Copenhagen), and Andreas Holm (Alexandra Institute).

Please note that this webinar will be recorded.